ChatGPT and Cybersecurity: Friend or Foe? (2026 Edition)

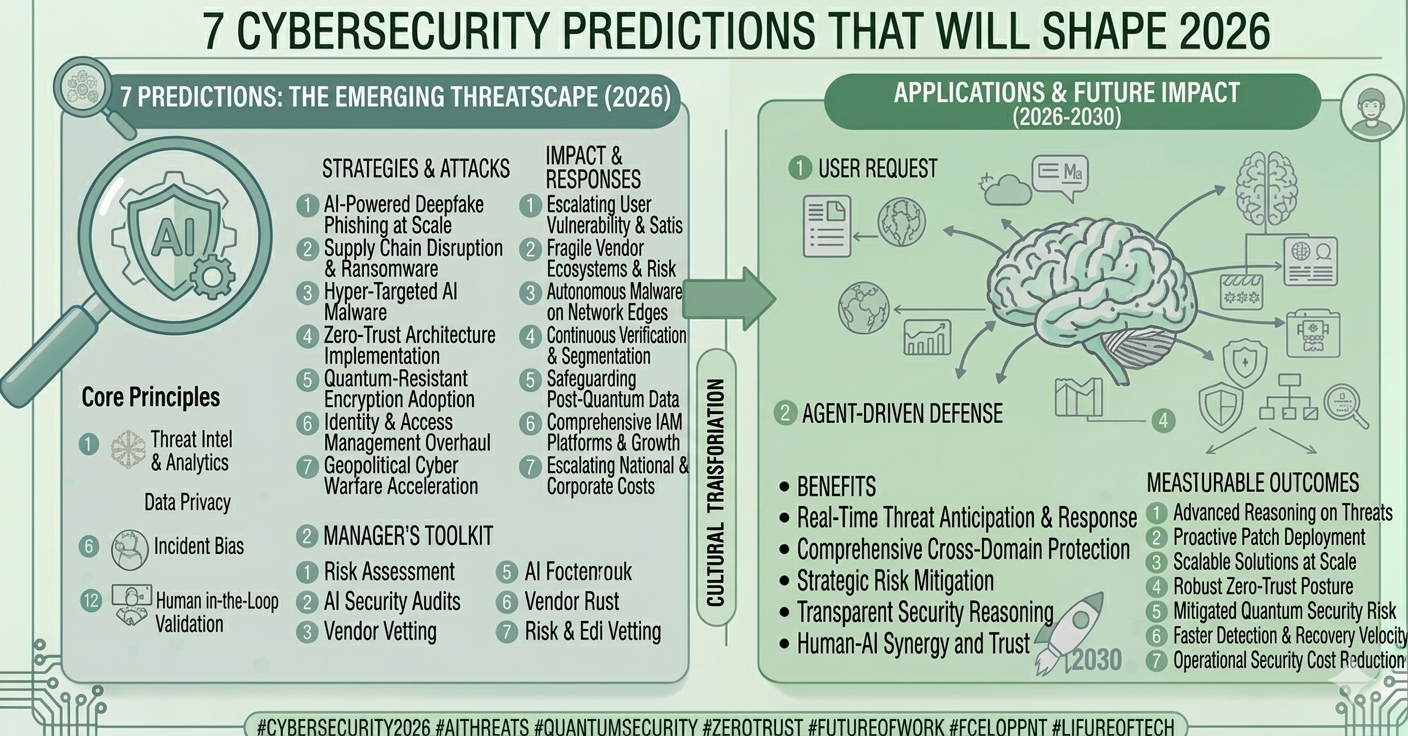

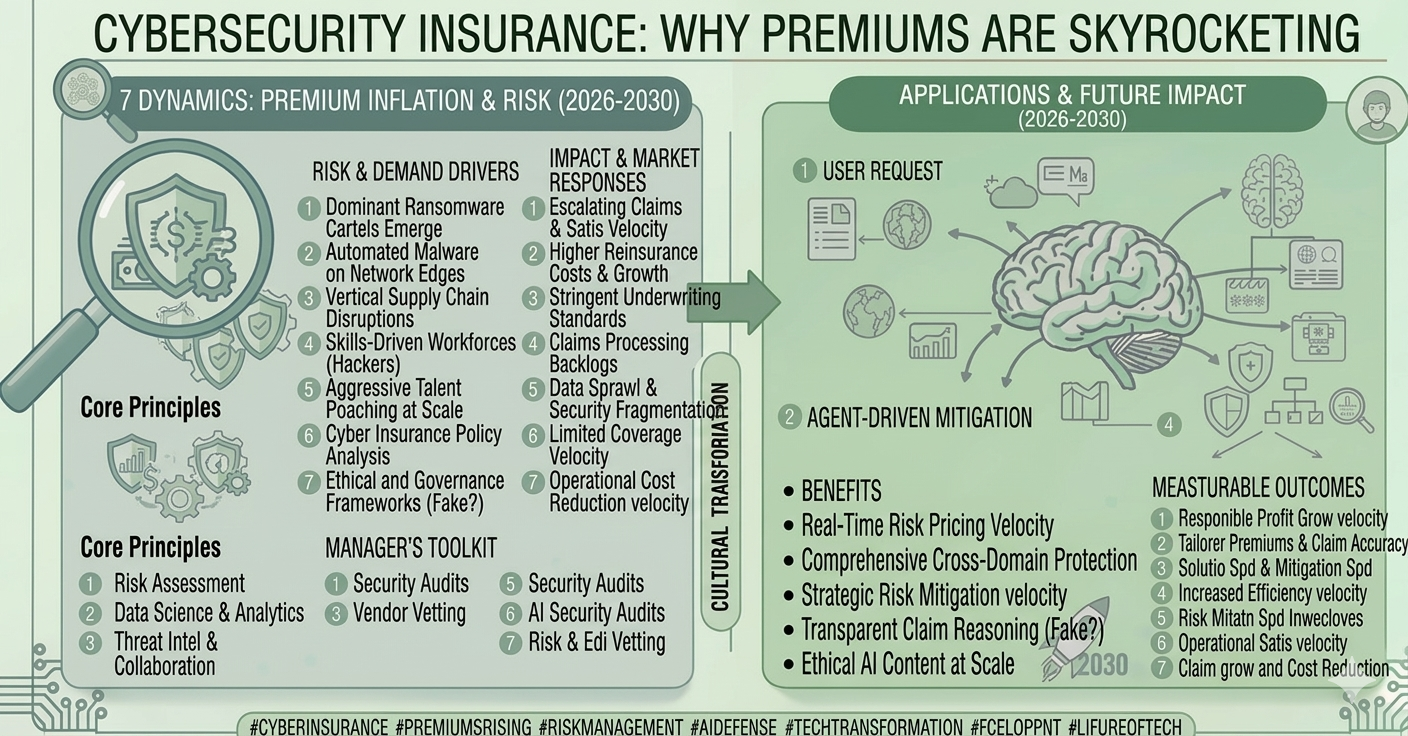

As we move through 2026, the data is clear: AI is the defining force in the global cybersecurity arms race. According to recent reports, over 90% of security leaders are concerned about AI-powered threats, yet nearly 70% see AI as the only way to win.

ChatGPT sits at the heart of this paradox. It is a tool of immense power, and like any tool, its impact depends entirely on whose hands are on the keyboard.

The "Foe": How Hackers Weaponized ChatGPT

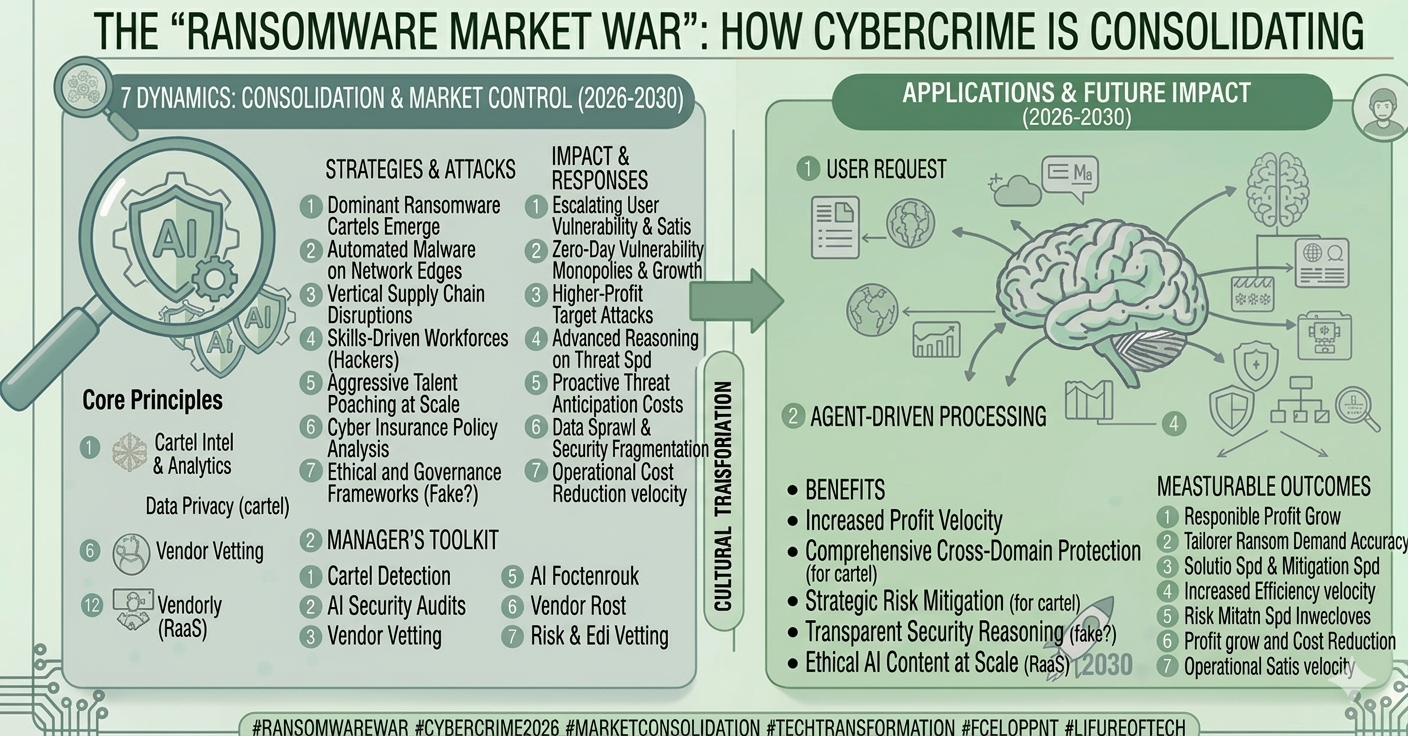

In the early days, "jailbreaking" ChatGPT was a hobby; in 2026, it is a professionalized industry. Hackers are using LLMs to collapse the "attack lifecycle" from days to mere minutes.

1. The Death of the "Obvious" Phishing Email

Remember when phishing emails were easy to spot due to bad grammar? Those days are gone. ChatGPT allows non-native speakers to craft grammatically perfect, culturally nuanced emails. In 2026, AI-generated phishing has a 40% higher engagement rate than traditional methods because it mimics the specific "vibe" and terminology of your company perfectly.

2. Polymorphic Malware

Advanced attackers use AI to generate polymorphic code: malware that constantly tweaks its own signature to evade traditional antivirus scanners. By the time a security tool recognizes a threat, the AI has already generated a "new version" that looks completely different to the system.
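To see why signature matching struggles here, consider a minimal and deliberately harmless sketch. It assumes a scanner that identifies files purely by a SHA-256 hash; real antivirus engines are more sophisticated, and the names `signature`, `known_bad`, and `is_flagged` are hypothetical, chosen only for illustration.

```python
import hashlib


def signature(payload: bytes) -> str:
    """Return a SHA-256 digest, standing in for a static file signature."""
    return hashlib.sha256(payload).hexdigest()


# Two harmless snippets that behave identically and differ only by a comment.
variant_a = b"print('hello')\n"
variant_b = b"print('hello')  # v2\n"

# Hypothetical blocklist that has only ever seen the first variant.
known_bad = {signature(variant_a)}


def is_flagged(payload: bytes) -> bool:
    """Flag a payload only if its exact hash is already on the blocklist."""
    return signature(payload) in known_bad


print(is_flagged(variant_a))  # the known variant matches the blocklist
print(is_flagged(variant_b))  # the trivially changed variant does not
```

A one-comment change produces a completely different hash, which is why defenders pair static signatures with behavior-based detection.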

3. Automated Reconnaissance

Hackers use ChatGPT to scan public data, LinkedIn profiles, and leaked databases to build "targets of interest" in seconds. It can summarize a CEO’s speaking style or a company’s recent project history to make a Business Email Compromise (BEC) attack feel incredibly authentic.

The "Friend": How Defenders Are Fighting Back

It's not all bad news. Cybersecurity professionals are using ChatGPT as a "force multiplier" to outpace the bad guys.

1. The 2-Second Incident Response

In 2026, "Mean Time to Detect" (MTTD) is the most important metric. Security analysts now paste obfuscated code or suspicious logs into AI models to decode malicious intent almost instantly. What used to take a human analyst hours of manual research now takes seconds, allowing teams that use AI to contain breaches 108 days faster than teams that do not.
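Part of that triage is plain decoding, which can also be scripted before anything is handed to a model. The sketch below is one assumed example rather than a complete tool: it pulls a Base64 argument out of a log line that uses PowerShell's `-EncodedCommand` flag (which carries UTF-16LE text) and returns the readable command. The function name and the regular expression are hypothetical, and the pattern only covers the `-enc` and `-EncodedCommand` spellings.

```python
import base64
import re
from typing import Optional

# Simplified pattern: real command lines may use other abbreviations of the flag.
ENCODED_FLAG = re.compile(
    r"-(?:enc|encodedcommand)\s+([A-Za-z0-9+/=]+)", re.IGNORECASE
)


def decode_encoded_command(log_line: str) -> Optional[str]:
    """Return the decoded PowerShell command in a log line, or None if absent."""
    match = ENCODED_FLAG.search(log_line)
    if match is None:
        return None
    raw = base64.b64decode(match.group(1))
    # PowerShell's -EncodedCommand expects Base64 of UTF-16LE text.
    return raw.decode("utf-16-le", errors="replace")


# Build a harmless sample so the example is self-contained.
sample = base64.b64encode("Write-Output 'hi'".encode("utf-16-le")).decode("ascii")
print(decode_encoded_command("powershell.exe -enc " + sample))
```

The decoded text is what an analyst (or an AI assistant) would then review for intent.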

2. Writing "Defense-Grade" Code

Defenders use ChatGPT to write complex SIEM queries and detection rules (for example, in Splunk's SPL or Microsoft's KQL) and automated scripts for "threat hunting." Instead of building every defense from scratch, analysts describe the behavior they want to catch in plain English, and the AI generates the technical query.
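As an illustration of the kind of logic such a request might turn into, here is a minimal Python sketch of a plain-English rule: "flag any source IP with five or more failed logins inside ten minutes." It is not SIEM syntax, and the event fields (`src_ip`, `outcome`, `timestamp`), the function name, and the default thresholds are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_bruteforce_sources(events, threshold=5, window=timedelta(minutes=10)):
    """Return source IPs with at least `threshold` failed logins inside `window`."""
    failures = defaultdict(list)
    for event in events:
        if event["outcome"] == "failure":
            failures[event["src_ip"]].append(event["timestamp"])

    flagged = set()
    for src_ip, times in failures.items():
        times.sort()
        start = 0
        # Sliding window over the sorted failure timestamps.
        for end in range(len(times)):
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 >= threshold:
                flagged.add(src_ip)
                break
    return flagged


# Hypothetical sample events: one noisy source and one quiet one.
base = datetime(2026, 1, 1, 12, 0)
events = [
    {"src_ip": "10.0.0.1", "outcome": "failure", "timestamp": base + timedelta(minutes=i)}
    for i in range(5)
]
events.append({"src_ip": "10.0.0.2", "outcome": "failure", "timestamp": base})
events.append({"src_ip": "10.0.0.2", "outcome": "success", "timestamp": base})

print(find_bruteforce_sources(events))
```

Whatever generates the rule, an analyst should still review and test it against known-good traffic before it goes into production.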

3. Solving the Talent Gap

With a global shortage of millions of cyber professionals, ChatGPT acts as a virtual mentor. Junior analysts use it to explain complex concepts like "XDR" or "Zero Trust" in simple terms, allowing them to handle higher-level tasks much earlier in their careers.

The 2026 Reality Check: A Double-Edged Sword

| Capability | As a "Foe" (Attacker) | As a "Friend" (Defender) |
| --- | --- | --- |
| Phishing | Creating hyper-realistic, mass-scale lures. | Generating high-quality "Phishing Simulations" for training. |
| Code | Generating exploits and evasive malware. | Scanning for vulnerabilities and writing patches. |
| Research | Finding "cracks" in a company's public profile. | Summarizing dark web threat intelligence in real time. |
| Speed | 192x faster than human-led attacks. | 50% reduction in manual SOC triage workload. |

Verdict: Friend or Foe?

In 2026, ChatGPT is both. However, the "winner" of this race is whoever adopts the technology faster. Organizations that ignore AI security are essentially bringing a knife to a laser-gun fight.

The true "foe" isn't ChatGPT itself; it’s stagnation. As long as your defense is evolving as fast as the AI that threatens it, you stay in the game.
